Apple’s ARKit opens new horizons for augmented reality

Apple unveiled ARKit at WWDC 2017. It is the company’s set of tools for developers to build augmented reality into their apps.

It leverages the graphics and processing chips inside existing iPads and iPhones, along with their motion sensors, to let developers create apps like Pokémon Go that superimpose digital objects on the real world.


How does AR work?

To understand how augmented reality works, we first need to understand its objective. In simple words: “To bring computer-generated objects into the real world and allow real-time interaction.”

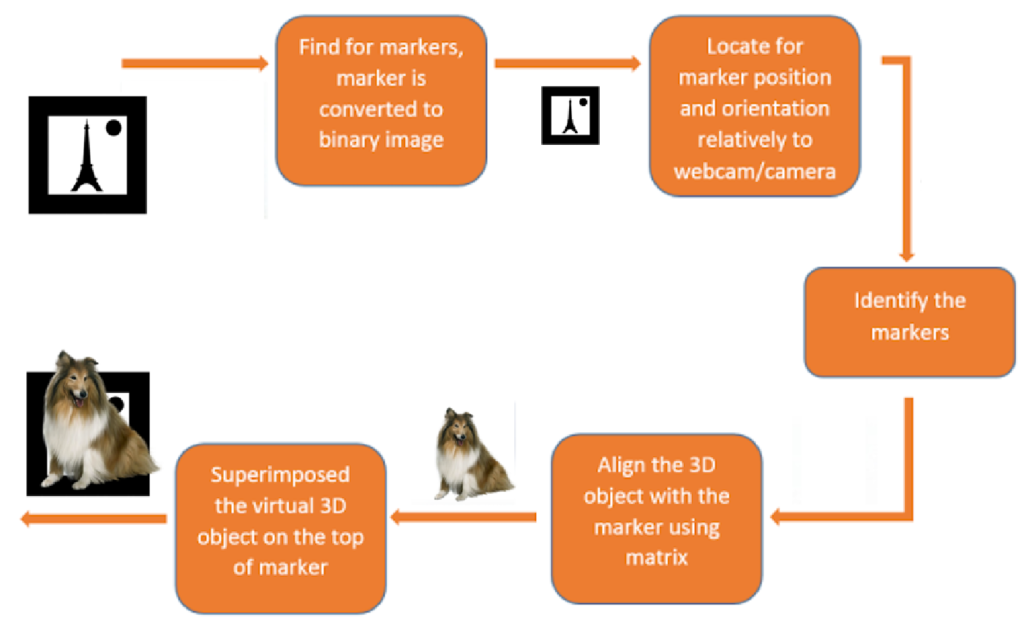

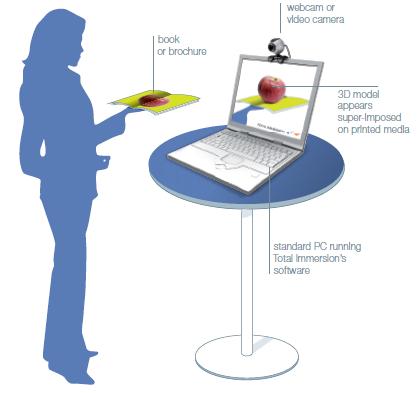

In marker-based AR, the device looks for a particular target, generally known as an ImageTarget. This can be anything: a printed 2D image or a real-world object such as a car or a human face. Once the augmented reality application recognizes the target via the camera, it processes the image and overlays virtual content such as a 3D model, video, image or other media. For example, you may see a movie poster spring to life and play a trailer for the film. As long as you look at the poster through the “window” of the display, you see augmented reality instead of plain old vanilla reality. By using smart algorithms and sensors such as accelerometers and gyroscopes, the device keeps the augmented elements aligned with the image of the real world.

Using a mobile application, the phone’s camera identifies and interprets the marker (ImageTarget). The software analyses the marker and creates a virtual image overlay on the phone’s screen, tied to the position of the camera. This means the app works with the camera to interpret the angle and distance of the phone from the marker.
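To make the "distance from the marker" idea concrete, here is a minimal sketch based on the pinhole camera model. It assumes the marker faces the camera head-on and that the camera's focal length in pixels is known from calibration. The function name and the numbers are illustrative assumptions, not part of any AR SDK.

```python
def estimate_marker_distance(focal_length_px: float,
                             marker_width_m: float,
                             marker_width_px: float) -> float:
    """Pinhole-camera estimate of the camera-to-marker distance in metres.

    Assumes the marker is roughly parallel to the image plane, so its
    apparent width shrinks in proportion to its distance.
    """
    if marker_width_px <= 0:
        raise ValueError("marker must be visible (positive pixel width)")
    return focal_length_px * marker_width_m / marker_width_px


# Hypothetical example: a 0.20 m wide poster that spans 400 px in the image,
# seen by a camera with an assumed focal length of 1000 px.
distance_m = estimate_marker_distance(1000.0, 0.20, 400.0)
print(f"Estimated distance: {distance_m:.2f} m")
```

Real trackers typically recover the full camera pose (rotation and translation) from several marker corners rather than from a single width, but the same proportionality sits underneath.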

Because of the number of calculations a phone must do to render the image or model over the marker, often only smartphones are capable of supporting augmented reality with any success. Phones need a camera and, if the AR media is not stored within the app, a good Internet connection.

Processing the real-time video stream requires three steps:

- Recognition: Recognition of an image, an object, a face or a body

- Tracking: Real-time localization in space of the image, object, face, or body

- Mix: Superposition of media (video, 3D, 2D, text, etc.) on top of this image, object, face or body.
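The "Mix" step can be illustrated with simple alpha blending, one common way to superimpose an overlay on a camera frame. This is a per-pixel sketch in plain Python under that assumption. Real applications do this on the GPU for whole frames, and the names below are illustrative.

```python
from typing import Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel in 0..255


def blend_pixel(frame: Pixel, overlay: Pixel, alpha: float) -> Pixel:
    """Composite one overlay pixel onto one camera pixel.

    alpha = 0.0 keeps the camera pixel unchanged;
    alpha = 1.0 shows only the overlay pixel.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return tuple(
        round(alpha * o + (1.0 - alpha) * f) for f, o in zip(frame, overlay)
    )


# A half-transparent light overlay on a darker camera pixel.
print(blend_pixel((100, 100, 100), (200, 200, 200), 0.5))
```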

Together, these three steps must take less than 40 ms per frame to match the 25 images per second that the human eye perceives as fluid motion (1000 ms / 25 = 40 ms). Powerful algorithms need to be applied, and research continues to advance each of these three steps, helped by the growing performance of equipment and devices.
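The 40 ms figure follows directly from the frame rate. The sketch below computes the per-frame budget and checks whether a set of stage timings fits into it. The stage timings are made-up numbers for illustration, not measurements.

```python
def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / frames_per_second


def fits_budget(stage_times_ms: dict, frames_per_second: float = 25.0) -> bool:
    """True if all pipeline stages together finish within one frame."""
    return sum(stage_times_ms.values()) <= frame_budget_ms(frames_per_second)


# Hypothetical timings for the three steps, in milliseconds.
stage_times_ms = {"recognition": 15.0, "tracking": 10.0, "mix": 8.0}

print(frame_budget_ms(25.0))              # budget at 25 frames per second
print(fits_budget(stage_times_ms, 25.0))  # does the pipeline fit at 25 fps?
print(fits_budget(stage_times_ms, 60.0))  # and at 60 fps?
```

With these assumed timings the pipeline fits at 25 frames per second but not at 60, where the budget shrinks to roughly 16.7 ms.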

Computer vision is in itself a heavy consumer of CPU, but the more processing power is available, the more sophisticated the algorithms can be, for a truly enhanced user experience. Furthermore, with the rapidly growing use of sensors such as GPS, compass, gyroscope, thermometer and speedometer, the information collected can be used to further enrich the user’s experience in the current context.

Augmented Reality devices

The type of augmented reality you are most likely to encounter uses a range of sensors (including a camera), computer components and a display device to create the illusion of virtual objects in the real world. Most augmented reality devices are self-contained, meaning that, unlike the Oculus Rift or HTC Vive VR headsets, they are completely untethered and do not need a cable or desktop computer to function.

Different display devices used in Augmented Reality:

- The head-mounted display (HMD) is worn on the head or attached to a helmet. This display can resemble goggles or glasses. In some instances, there is a screen that covers a single eye.

- The handheld device is a portable computer or mobile smartphone such as the iPhone.

- The spatial display projects graphics onto fixed surfaces.

Key Components of Augmented Reality Devices:

- Sensors and Cameras:

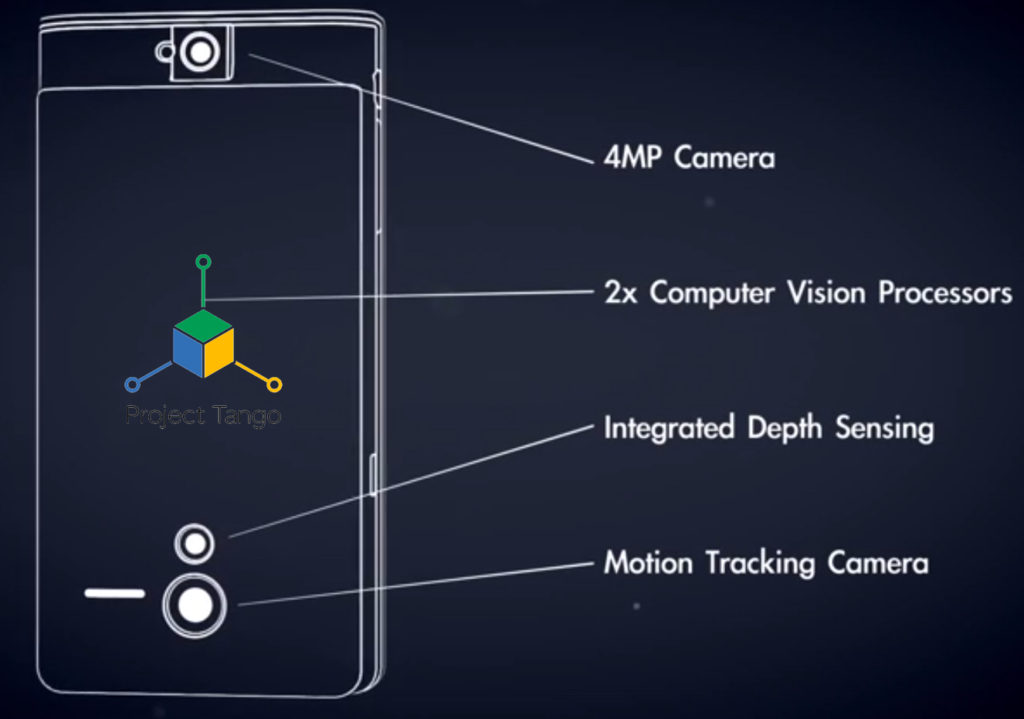

Sensors gather a user’s real-world interactions and communicate them to be processed and interpreted. Cameras are located on the outside of the device and visually scan the surroundings to collect data. The device takes this information, which often determines where surrounding physical objects are located, and then formulates a digital model to determine the appropriate output. In the case of Microsoft HoloLens or Google Tango, specific cameras perform specific duties, such as depth sensing. Depth-sensing cameras work in tandem with two “environment understanding cameras” on each side of the device.

- Projection:

While “Projection-Based Augmented Reality” is a category in itself, we are specifically referring to a miniature projector often found in a forward and outward-facing position on wearable augmented reality headsets. The projector can essentially turn any surface into an interactive environment. As mentioned above, the information taken in by the cameras that examine the surrounding world is processed and then projected onto a surface in front of the user, which could be a wrist, a wall, or even another person. In the future, you may not need an iPad to play an online game of chess because you will be able to play it on the tabletop in front of you.

- Processing:

Augmented reality devices are basically mini-supercomputers packed into mobile devices or tiny wearables. These devices require significant processing power and use components that include a CPU, a GPU, flash memory, RAM, a Bluetooth/Wi-Fi microchip, a global positioning system (GPS) microchip, and more. Advanced augmented reality devices, such as the Microsoft HoloLens, use an accelerometer (to measure the acceleration of your head as it moves), a gyroscope (to measure the tilt and orientation of your head), and a magnetometer (to function as a compass and figure out which direction your head is pointing) to provide a truly immersive experience.

- Reflection:

Mirrors are used in augmented reality HMD/eyewear devices to assist with the way your eye views the virtual image. Some augmented reality devices may have “an array of many small curved mirrors” (as with the Magic Leap augmented reality device), and others may have a simple double-sided mirror with one surface reflecting incoming light to a side-mounted camera and the other surface reflecting light from a side-mounted display to the user’s eye. In the Microsoft HoloLens, the use of “mirrors” involves see-through holographic lenses (Microsoft refers to them as waveguides) that use an optical projection system to beam holograms into your eyes.
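The motion sensors listed under Processing are usually combined rather than read in isolation. One simple and widely used way to do this is a complementary filter, which trusts the gyroscope over short intervals and the accelerometer over long ones. The sketch below estimates head pitch this way. The axis convention, the 0.98 weight and the function names are assumptions for illustration, and nothing here is claimed to be what HoloLens or any other device actually runs.

```python
import math


def accel_pitch_deg(ax: float, ay: float, az: float) -> float:
    """Pitch angle implied by gravity as seen by the accelerometer.

    Assumes the device is not accelerating, so the reading is gravity only.
    """
    return math.degrees(math.atan2(-ax, math.sqrt(ay * ay + az * az)))


def complementary_filter(pitch_deg: float, gyro_rate_dps: float,
                         accel_pitch: float, dt_s: float,
                         weight: float = 0.98) -> float:
    """Blend the integrated gyroscope rate with the accelerometer angle."""
    gyro_estimate = pitch_deg + gyro_rate_dps * dt_s  # short-term, drifts
    return weight * gyro_estimate + (1.0 - weight) * accel_pitch


# Hypothetical stationary headset tilted by 10 degrees: the gyroscope reads
# zero rotation, and the estimate is pulled toward the accelerometer angle.
pitch = 0.0
for _ in range(500):
    pitch = complementary_filter(pitch, gyro_rate_dps=0.0,
                                 accel_pitch=10.0, dt_s=0.01)
print(f"Estimated pitch: {pitch:.2f} degrees")
```

A magnetometer can be blended in the same way to correct heading drift, which the gyroscope and accelerometer alone cannot observe.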

How Augmented Reality is Controlled (User Interaction)

Apart from our ordinary smartphones, other augmented reality devices are often controlled either by a touchpad or by voice commands. The touchpad is usually placed somewhere on the device that is easy to reach. It works by sensing the pressure changes that occur when a user taps or swipes a specific spot. Voice commands work very similarly to the way they do on our smartphones. A tiny microphone on the device picks up your voice, and a microprocessor then interprets the commands. Voice commands, such as those on the RealWear HMT, are preprogrammed, so you choose from a fixed list of commands.

AR devices like Microsoft HoloLens allow interaction through a combination of gaze, gestures, and voice. Instead of moving the cursor with a mouse, you use your gaze, and instead of clicking or tapping, you use hand gestures (input by tracking the position of a hand that is visible to the device).
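As a rough illustration of gaze acting as the cursor and a gesture acting as the click, the sketch below casts a ray along the gaze direction and tests it against spherical targets. The sphere-shaped targets, the `air_tap` flag and the function names are simplifying assumptions for this example, not the API of any headset SDK.

```python
import math


def gaze_hits_sphere(origin, direction, center, radius) -> bool:
    """True if a gaze ray from `origin` along `direction` hits the sphere."""
    length = math.sqrt(sum(d * d for d in direction))
    if length == 0:
        raise ValueError("gaze direction must be non-zero")
    unit = [d / length for d in direction]
    to_center = [c - o for c, o in zip(center, origin)]
    dist_sq = sum(c * c for c in to_center)
    # Distance along the ray to the point closest to the sphere centre.
    t = sum(a * b for a, b in zip(to_center, unit))
    if t < 0:
        # Centre is behind the viewer: a hit only if the viewer is inside.
        return dist_sq <= radius * radius
    return dist_sq - t * t <= radius * radius


def select_target(origin, direction, targets, air_tap: bool):
    """Return the first target under the gaze when an air tap occurs."""
    if not air_tap:
        return None
    for name, (center, radius) in targets.items():
        if gaze_hits_sphere(origin, direction, center, radius):
            return name
    return None


# Hypothetical scene: two virtual buttons floating two metres ahead.
targets = {
    "play": ((0.0, 0.0, -2.0), 0.5),
    "stop": ((2.0, 0.0, -2.0), 0.5),
}
head = (0.0, 0.0, 0.0)
looking_forward = (0.0, 0.0, -1.0)
print(select_target(head, looking_forward, targets, air_tap=True))
```

This version returns the first matching target in dictionary order. A fuller implementation would pick the nearest hit along the ray.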

Time will pass and things may change completely, but we can be sure that AR will find its place in people’s everyday lives.
